Single-photon 3D imaging with deep sensor fusion

David B. Lindell, Matthew O'Toole, Gordon Wetzstein

Photon-efficient 3D imaging using single-photon detectors and deep neural networks.


Abstract

Sensors that capture 3D scene information provide useful data for tasks in vehicle navigation, gesture recognition, human pose estimation, and geometric reconstruction. Active illumination time-of-flight sensors in particular have become widely used to estimate a 3D representation of a scene. However, the maximum range, density of acquired spatial samples, and overall acquisition time of these sensors are fundamentally limited by the minimum signal required to estimate depth reliably.

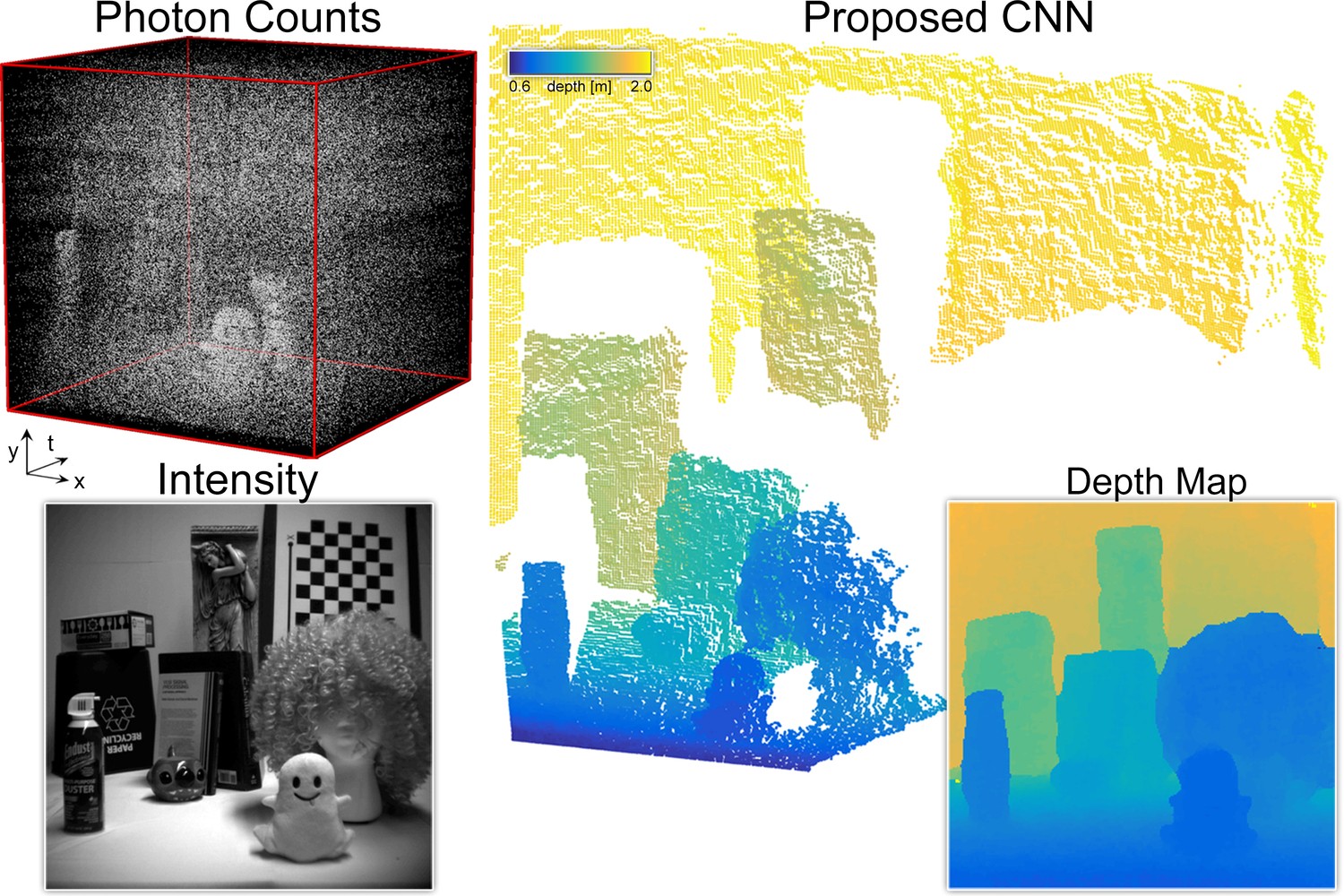

In this paper, we propose a data-driven method for photon-efficient 3D imaging which leverages sensor fusion and computational reconstruction to rapidly and robustly estimate a dense depth map from low photon counts. Our sensor fusion approach uses measurements of single-photon arrival times from a low-resolution single-photon detector array and an intensity image from a conventional high-resolution camera. Using a multi-scale deep convolutional network, we jointly process the raw measurements from both sensors and output a high-resolution depth map. To demonstrate the efficacy of our approach, we implement a hardware prototype and show results using captured data. At low signal-to-background levels, our depth reconstruction algorithm with sensor fusion outperforms other methods for depth estimation from noisy measurements of photon arrival times.
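For context on what "depth estimation from photon arrival times" involves, the sketch below shows a simple histogram peak-finding baseline for a single pixel. It is not the network described above: it bins the arrival times, takes the most populated bin, and converts that round-trip time to distance with d = c·t/2. The function name `depth_from_timestamps`, the 1 ns bin width, and the sample timestamps are illustrative assumptions, not values from the paper.

```python
from collections import Counter

C = 299_792_458.0  # speed of light in m/s


def depth_from_timestamps(timestamps_s, bin_width_s):
    """Estimate depth from photon arrival times by histogram peak finding.

    Hypothetical baseline for illustration only: bin the arrival times,
    pick the most populated bin, and convert the round-trip time at that
    bin's center to a one-way distance.
    """
    if not timestamps_s:
        raise ValueError("need at least one photon detection")
    # Histogram of bin indices, one entry per detected photon.
    counts = Counter(int(t // bin_width_s) for t in timestamps_s)
    # Most populated bin; ties resolve to the earliest bin.
    peak_bin = min(counts, key=lambda b: (-counts[b], b))
    round_trip_s = (peak_bin + 0.5) * bin_width_s
    # Light travels to the scene and back, so halve the path length.
    return C * round_trip_s / 2.0


# Assumed example pixel: three signal photons near 20 ns plus two
# background photons at unrelated times.
timestamps = [20.1e-9, 3.7e-9, 20.3e-9, 41.2e-9, 20.2e-9]
depth_m = depth_from_timestamps(timestamps, bin_width_s=1e-9)
```

With these assumed timestamps the peak falls in the 20 to 21 ns bin, giving a depth of roughly 3.07 m. When only a handful of photons arrive and background counts are comparable to signal counts, the most populated bin is often a background bin, which is the regime where a learned, intensity-guided reconstruction is meant to help.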


Overview

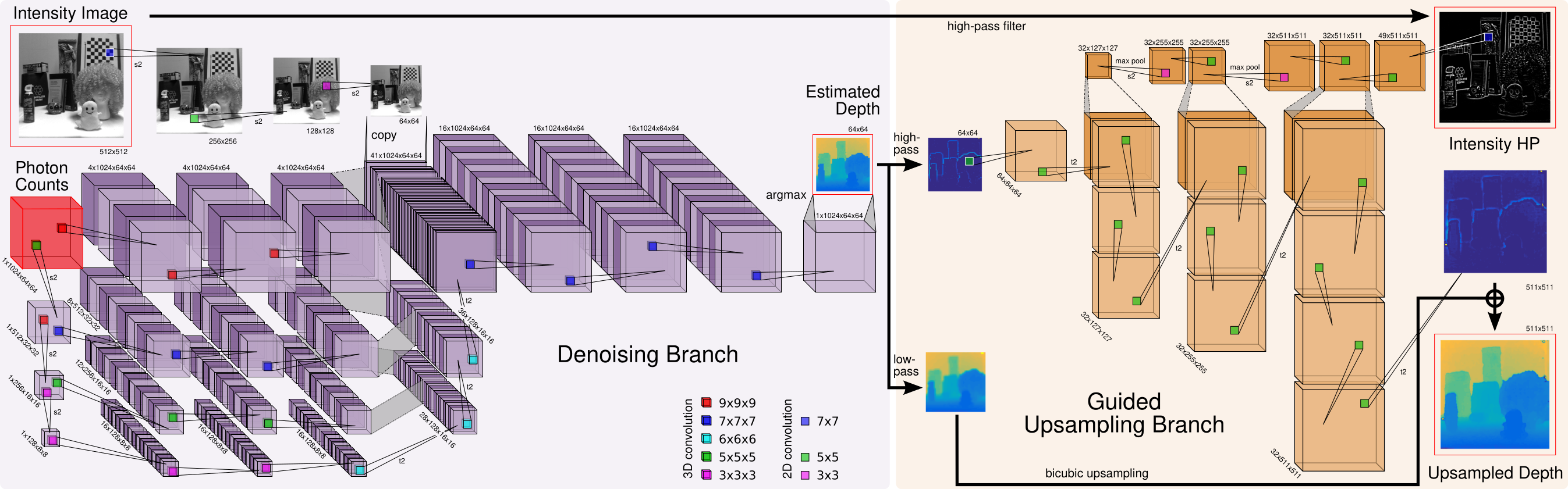

Convolutional neural network architecture for depth estimation and image-guided upsampling from captured photon counts and an intensity image.

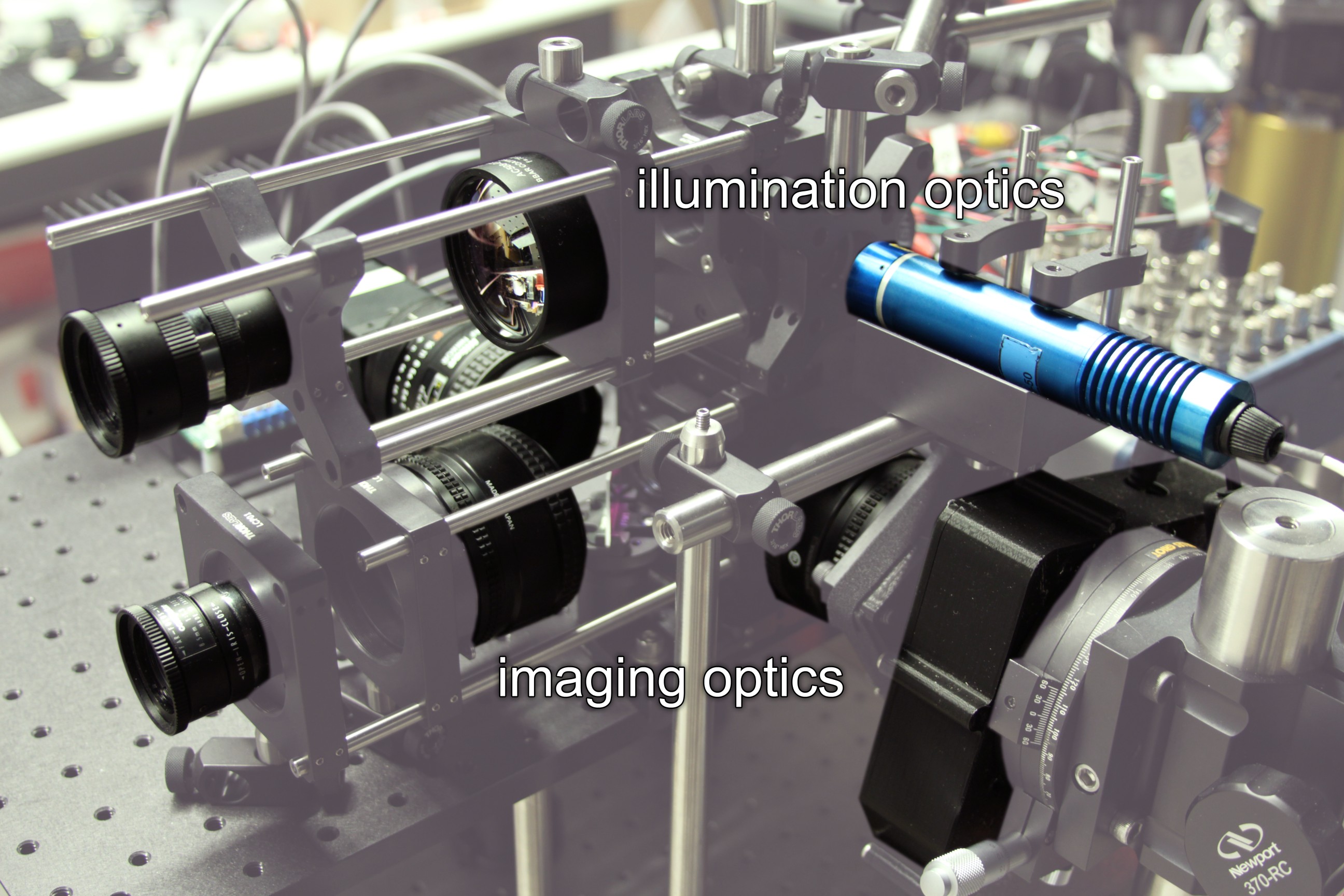

Single-photon imaging prototype showing the imaging optics and illumination optics.

Acknowledgments

This project was supported by a Stanford Graduate Fellowship, a Banting Postdoctoral Fellowship, an NSF CAREER Award (IIS 1553333), a Terman Faculty Fellowship, a Sloan Fellowship, the KAUST Office of Sponsored Research through the Visual Computing Center CCF grant, the Center for Automotive Research at Stanford (CARS), and the DARPA REVEAL program. The authors are grateful to Edoardo Charbon, Pierre-Yves Cattin, and Samuel Burri for providing the LinoSPAD sensor used in this work and for their continued support of it.

Citation

@article{Lindell:2018:3D,

author = {David B. Lindell and Matthew O'Toole and Gordon Wetzstein},

title = {{Single-Photon {3D} Imaging with Deep Sensor Fusion}},

journal = {ACM Trans. Graph. (SIGGRAPH)},

volume = {37},

number = {4},

year = {2018},

}