Reconstructing transient images from single-photon sensors

Matthew O'Toole, Felix Heide, David B. Lindell, Kai Zang, Steven Diamond, Gordon Wetzstein

Capturing and reconstructing transient images with single-photon avalanche diodes (SPADs) at interactive rates.

CVPR Spotlight Presentation

Video

Datasets

Abstract

Computer vision algorithms build on 2D images or 3D videos that capture dynamic events at the millisecond time scale. However, capturing and analyzing “transient images” at the picosecond scale (i.e., at one trillion frames per second) reveals unprecedented information about a scene and the light transport within it. This is not only crucial for time-of-flight range imaging; it also furthers our understanding of light transport phenomena at a more fundamental level and may allow us to revisit many assumptions made in computer vision algorithms.

In this work, we design and evaluate an imaging system that builds on single-photon avalanche diode (SPAD) sensors to capture multi-path responses with picosecond-scale active illumination. We develop inverse methods that use modern approaches to deconvolve and denoise measurements in the presence of Poisson noise, and compute transient images at a higher quality than previously reported. The small form factor, fast acquisition rates, and relatively low cost of our system potentially make transient imaging more practical for a range of applications.
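To give a feel for what deconvolution under Poisson noise involves, the sketch below applies Richardson-Lucy iterations to a single synthetic photon-count histogram. Richardson-Lucy is a classic maximum-likelihood scheme for Poisson data and is used here only as a stand-in; it is not the method developed in the paper. The function name `richardson_lucy_1d`, the Gaussian instrument response, the bin count, and the peak positions and amplitudes are all illustrative assumptions.

```python
import numpy as np


def richardson_lucy_1d(measured, kernel, num_iters=50, eps=1e-12):
    """Richardson-Lucy deconvolution of a 1D photon-count histogram.

    Illustrative stand-in for Poisson-aware deconvolution, not the
    paper's algorithm. `kernel` is an assumed instrument response with
    an odd number of taps.
    """
    measured = np.asarray(measured, dtype=float)
    kernel = np.asarray(kernel, dtype=float)
    kernel = kernel / kernel.sum()      # unit-sum blur preserves photon flux
    kernel_flipped = kernel[::-1]       # adjoint of the blur operator

    # Per-bin normalization that accounts for truncation at the boundaries.
    norm = np.convolve(np.ones_like(measured), kernel_flipped, mode="same")

    # Start from a flat, strictly positive estimate.
    estimate = np.full_like(measured, max(measured.mean(), eps))
    for _ in range(num_iters):
        blurred = np.convolve(estimate, kernel, mode="same")
        ratio = measured / np.maximum(blurred, eps)
        correction = np.convolve(ratio, kernel_flipped, mode="same")
        estimate = estimate * correction / np.maximum(norm, eps)
    return estimate


# Synthetic single-pixel transient: two returns at assumed time bins.
rng = np.random.default_rng(0)
num_bins = 200
truth = np.zeros(num_bins)
truth[60] = 500.0    # stronger return (hypothetical)
truth[120] = 200.0   # weaker, later return (hypothetical)

# Assumed Gaussian instrument response: 21 taps, sigma of 3 bins.
taps = np.arange(-10, 11)
kernel = np.exp(-0.5 * (taps / 3.0) ** 2)
kernel /= kernel.sum()

# Blur the ideal transient, then draw Poisson-distributed photon counts.
expected_counts = np.convolve(truth, kernel, mode="same")
measured = rng.poisson(expected_counts).astype(float)

estimate = richardson_lucy_1d(measured, kernel, num_iters=50)
print("strongest recovered bin:", int(np.argmax(estimate)))
```

The multiplicative update keeps the estimate non-negative, which suits photon counts. Without regularization, running many more iterations tends to amplify noise, which is one reason practical pipelines pair deconvolution with a denoising prior.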

Results

Color Image

Transient Image

Statue of David

Fiber

Pepsi Bottle

Fruit

Foam Box

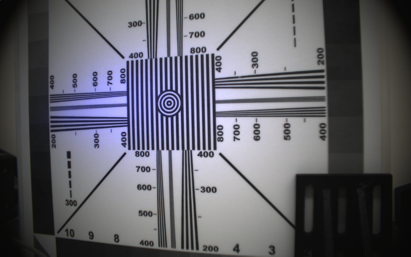

Resolution Chart

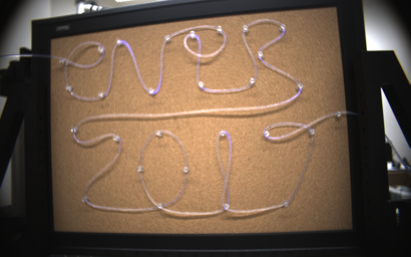

Fiber: CVPR 2017

Acknowledgments

The authors are grateful to Edoardo Charbon, Pierre-Yves Cattin, and Samuel Burri for providing the LinoSPAD sensor used in this work and continued support of it. O’Toole is supported by the Government of Canada through the Banting Postdoctoral Fellowships program, and Wetzstein is supported by a National Science Foundation CAREER award (IIS 1553333), a Terman Faculty Fellowship, a Sloan Fellowship, the Center for Automotive Research at Stanford (CARS), and by the KAUST Office of Sponsored Research through the Visual Computing Center CCF grant.

Citation

@inproceedings{OToole:2017:SPAD,

author = {M. O'Toole and F. Heide and D. Lindell and K. Zang and S. Diamond and G. Wetzstein},

title = {{Reconstructing Transient Images from Single-Photon Sensors}},

booktitle = {Proc. IEEE CVPR},

year = {2017},

}